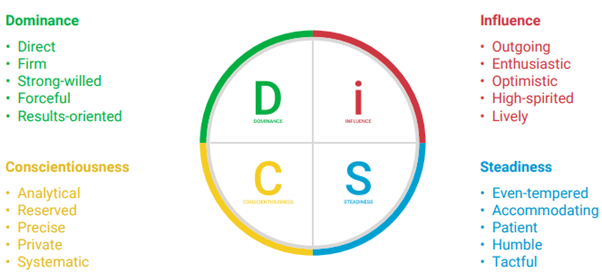

DiSC (specifically, Everything DiSC®) is used by organizations worldwide to support self-awareness, communication, team building, and conflict resolution. Everything DiSC® was not designed as a clinical psychological tool for diagnosing people or for measuring personality traits in depth or breadth. Instead, it is meant to measure and describe observable behavior in the workplace.

As such, it’s designed to be convenient, accessible, and actionable. But how does that translate to reliable and valid measurement? More importantly, how should L&D professionals, facilitators, and tool users think about it?

In this series, we take an objective look at the research on Everything DiSC® published by the tool’s publisher, Wiley (Everything DiSC® Manual, Chapter 4), and compare it with outside information and research on related tools. Critiques of the tool exist, and we will explore some of them, but our goal is to cut through the rhetoric. We don’t advocate for or against using Everything DiSC®. Instead, we want to provide information on what it DOES measure well (and has evidence for), where it can be useful in organizations, and what it DOESN’T do.

If you want to learn more about how Everything DiSC® works and how to think about both sides of the story when it comes to reliability and validity, read on. We promise not to waste your time and will reference the actual manual throughout.

Everything DiSC Reliability: What the Manual Says

When people take workplace assessments for learning and development, they (and facilitators) often expect some level of reliability. Whether the assessment is retaken six months later for a coaching follow-up or next week in a team-building workshop, participants will often discuss their scores on the tool’s scales, so it helps to have confidence that those scores are reliable.

The Everything DiSC® Manual dedicates a chapter to reporting research on the tool’s reliability. Throughout this series, we will quote the manual extensively when discussing reliability and validity. In this article, we’ll focus on reliability as reported by the publisher and save some of the controversies around validity for article 2.

The manual reports on two main types of reliability: internal consistency for the eight scales (Di, i, iS, S, SC, C, CD, D), and test-retest stability for both the eight scales and the twelve styles on the Everything DiSC® circumplex map.

Everything DiSC® Insights on Internal Consistency

Also known as, “Do the questions on each scale really measure the same thing?” Every scale on Everything DiSC® includes multiple statements (e.g., “I am direct” or “I tend to take the lead”) that respondents rate as true or false. Internal consistency looks at how closely these statements “hang together.” The manual reports internal consistency using a statistic known as Cronbach’s alpha. Values above .70 are generally accepted as sufficient, and values above .80 are considered very good.

Here’s what they found with two different samples:

- Sample 1 (N = 752): Median alpha = .87

- Sample 2 (N = 39,607): Median alpha = .83

As these numbers and Table 4.1 in the manual show, every scale clears the .70 threshold, and many fall in the .85–.90 range (e.g., Di = .90/.85, i = .90/.88, S = .87/.82 across Samples 1 and 2, respectively), indicating strong internal consistency.
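To make the statistic concrete, here is a minimal Python sketch of Cronbach’s alpha for a single scale, using the standard formula alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores), where k is the number of items. The response matrix is a hypothetical toy example (five people, four true/false items), not data from the manual, and the hypothetical `describe_alpha` helper simply restates the .70 and .80 rules of thumb above. For true/false items, this formula gives the same value as the Kuder-Richardson 20 (KR-20) coefficient.

```python
from statistics import pvariance


def cronbach_alpha(responses):
    """Cronbach's alpha for one scale.

    responses: one row per respondent, one column per item (1 = true, 0 = false).
    """
    k = len(responses[0])  # number of items on the scale
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Variance of each item across respondents
    item_vars = [pvariance([row[j] for row in responses]) for j in range(k)]
    # Variance of respondents' total scale scores
    total_var = pvariance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have no variance")
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


def describe_alpha(alpha):
    """Hypothetical helper applying the rules of thumb quoted in this article."""
    if alpha > 0.80:
        return "very good"
    if alpha > 0.70:
        return "sufficient"
    return "below the usual threshold"


# Hypothetical toy data: 5 respondents x 4 true/false items
toy = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 0, 1, 0],
]
alpha = cronbach_alpha(toy)
print(round(alpha, 2), describe_alpha(alpha))  # prints: 0.89 very good
```

Items that rise and fall together across respondents push the total-score variance up relative to the summed item variances, which is what drives alpha toward 1.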

Test-Retest Reliability

Also known as, “Will I get the same scores if I take the assessment twice?” If someone takes Everything DiSC® today and then again next week, will they receive similar scores? A sample of 599 people took Everything DiSC® twice with an interval of two weeks between tests.

For the scales, test-retest correlations were strong:

- Scale correlations ranged from .85 to .88 across the eight scales (e.g., Di = .86, i = .87, SC = .88; see Table 4.2).

- All of these exceed .80, a value often considered “very good” (Streiner, 2003, as cited in the Everything DiSC® Manual).

For styles, the manual uses the change in angle from test to retest as its measure of stability. This is because respondents are assigned a style based on the angle of their position on the Everything DiSC® 360° circumplex map (not its distance from the center), and each of the 12 styles covers 30° of the map.
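The angle-based logic can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Wiley’s scoring code: we assume, hypothetically, that the twelve 30° sectors are numbered 0 to 11 starting at 0°, and the hypothetical `angle_change` helper measures the shorter way around the circle, so a change can never exceed 180°.

```python
def style_index(angle_deg, sector_width=30.0):
    """Which of 12 equal sectors (0-11) an angle falls in.

    Hypothetical numbering: sector 0 starts at 0 degrees.
    """
    return int((angle_deg % 360) // sector_width)


def angle_change(a, b):
    """Smallest difference between two angles on a circle, in degrees (0-180)."""
    d = abs(a - b) % 360
    return min(d, 360 - d)


# The same 12-degree change can keep or cross a style boundary
print(style_index(3), style_index(15))   # 0 0 -> same style
print(style_index(20), style_index(32))  # 0 1 -> style label changes
print(angle_change(350, 10))             # 20 (wraps around 0 degrees)
```

The example suggests why an angle metric can be more informative than a simple same-style/different-style tally: an identical 12° shift leaves one person in their sector but moves another across a boundary.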

What they found:

- The median change in angle was 12°, far less than the 90° you would expect if people were simply assigned a random style each time.

- One third changed by 7° or less. Two thirds changed by 19° or less (see Table 4.3).

- People with longer vectors (higher scores, indicating a stronger preference for a style) were more stable than people with shorter vectors (scores close to the center of the circle, indicating little preference for any style). The median change was 10° for longer vectors vs. 23° for shorter vectors. This matches what circumplex theory predicts: near the center of the circle, a small shift in scores can swing the angle much further than the same shift would for someone far from the center.
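Both findings, the 90° random baseline and the greater stability of longer vectors, follow from circle geometry, which a short Python simulation can illustrate. The x/y coordinates below are hypothetical stand-ins for a person’s position on the map, not the manual’s actual scoring axes, and `angle_of`, `shift_effect`, and `random_baseline` are hypothetical helpers; `angle_change` repeats the shorter-way-around measure so the block runs on its own.

```python
import math
import random
from statistics import median


def angle_of(x, y):
    """Angle of a point on the map, in degrees from 0 to 360."""
    return math.degrees(math.atan2(y, x)) % 360


def angle_change(a, b):
    """Smallest difference between two angles, in degrees (0-180)."""
    d = abs(a - b) % 360
    return min(d, 360 - d)


def shift_effect(x, y, dy=1.0):
    """Angle change caused by moving a point dy units along the y axis."""
    return angle_change(angle_of(x, y), angle_of(x, y + dy))


def random_baseline(n=20_000, seed=1):
    """Median angle change if both sittings placed people at random on the circle."""
    rng = random.Random(seed)
    return median(
        angle_change(rng.uniform(0, 360), rng.uniform(0, 360)) for _ in range(n)
    )


long_vector = shift_effect(8.0, 0.0)   # far from the center
short_vector = shift_effect(1.0, 0.0)  # near the center
print(round(long_vector, 1), round(short_vector, 1))  # 7.1 45.0
print(round(random_baseline()))  # close to 90; exact value depends on the draws
```

In this toy setup the same one-unit shift moves the near-center point more than six times as far around the circle. That is the same direction as the manual’s 10° vs. 23° finding, though the magnitudes here are arbitrary.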

The authors conclude: “Taken together, these data suggest that the DiSC scales are stable over repeated administrations. Consequently, test takers and test administrators should, on average, expect no more than small changes when the instrument is taken at different times.”

Put plainly, if you’re using Everything DiSC® in an organization and participants will be discussing their scale scores in a workshop, you can reasonably expect that if they retake the assessment a few months later for follow-up coaching, they will receive similar scores. Keep in mind that the manual’s retest interval was two weeks, so stability over several months is an extrapolation from that data.

Will they be exactly the same? Probably not, especially if more time has passed. People’s responses can shift as they interpret questions differently over time, or simply because they’re taking the assessment a second time in a different setting. Some small change is to be expected.

Overall, these scores appear quite reliable.

Digging Deeper With Everything DiSC®

Internal consistency and test-retest reliability are two common ways of checking whether a tool measures consistently. On both, Everything DiSC® shows strong evidence that it reliably measures individuals’ behavioral preferences in the workplace. Does that mean it will predict job performance? Or that it taps into deep, enduring aspects of your personality? Not necessarily: reliability is not the same as validity. We’ll dig into some of those questions in article 2 of this series.